What a senior auditor needs 2–3 days to cover — delivered in 30 minutes, fully autonomous. Verified findings across 11 dimensions.

Contents

- What Comprehensive Actually Means

- Evidence, Not Assertions

- Things That Genuinely Surprised Me

- What Gets Delivered

- Why AI Specifically

The problem with most website audits: they're scoped to what one person can check manually in a few hours. The surface gets covered — a slow load time, a missing meta tag — but everything that requires systematic observation gets missed.

Whether analytics fire correctly in each consent state. Whether a third-party script is obfuscated and undeclared in the privacy policy. Whether Core Web Vitals hold up on mobile under real conditions. Whether structured data is complete enough for search engines and LLMs to understand what the site is about. Whether the infrastructure signals trust to browsers and crawlers.

The result is a report full of "appears to be" and "seems like." Findings that collapse under the first implementation question.

What Comprehensive Actually Means

At databy.io, we audit across 11 dimensions:

- Analytics & tracking — GA4 configuration, dataLayer structure, event taxonomy, server-side setup

- Consent management — CMP behavior, what fires before vs. after consent, what fires after rejection

- Consent Mode v2 — whether the signal is correctly transmitted to Google and ad platforms

- Third-party scripts — full inventory, including scripts not declared in the privacy policy

- Performance — Core Web Vitals (desktop + mobile), top impact factors

- SEO — title, meta, heading structure, canonicalization, robots directives

- Structured data — Product, Organization, BreadcrumbList schema implementation

- Accessibility — WCAG compliance, contrast ratios, alt text coverage, keyboard navigation

- AI readiness — entity coverage, structured data completeness, llms.txt

- E-commerce — product feeds, GA4 purchase funnel, PPC conversion signal accuracy

- Infrastructure — security headers, HTTPS configuration, sitemap and feed freshness

None of these is a checkbox. Each is a tested behavior, observed with evidence.

Evidence, Not Assertions

Every finding carries a confidence level:

- Verified — directly observed and corroborated across multiple sources: we saw the network request, confirmed the tag fired in the correct consent state, cross-checked with the dataLayer

- Inferred — one data source supports the finding; the alternative explanation is implausible

- Uncertain — we flagged a signal we couldn't confirm, and we say so

No guessing. No findings presented as certain that aren't. If we're uncertain, the report says so.

This matters more than it sounds. An audit that says "GA4 appears to be configured correctly" is useless to an implementation team. An audit that says "GA4 purchase event fires on order confirmation, observed in 3 of 3 test transactions, with correct transaction_id and revenue parameters" is actionable.
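One way to keep these levels from becoming a matter of tone is to derive them from the evidence attached to each finding. The sketch below is illustrative only: the `Finding` class, its field names, and the rule that two distinct sources make a finding "Verified" are assumptions made for this example, not a description of the databy.io tooling.

```python
from dataclasses import dataclass, field
from enum import Enum


class Confidence(Enum):
    VERIFIED = "verified"
    INFERRED = "inferred"
    UNCERTAIN = "uncertain"


@dataclass
class Finding:
    dimension: str
    statement: str
    # Independent evidence sources, e.g. "network_request", "dataLayer".
    sources: list[str] = field(default_factory=list)
    # True when a single source leaves no plausible alternative explanation.
    alternative_implausible: bool = False

    @property
    def confidence(self) -> Confidence:
        distinct = set(self.sources)
        # Assumed rule: two or more distinct sources count as corroboration.
        if len(distinct) >= 2:
            return Confidence.VERIFIED
        if len(distinct) == 1 and self.alternative_implausible:
            return Confidence.INFERRED
        return Confidence.UNCERTAIN
```

With this shape, a finding backed by both a captured network request and a dataLayer entry comes out as `Confidence.VERIFIED`, while a finding with no attached evidence can only ever be `Confidence.UNCERTAIN`.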

Things That Genuinely Surprised Me

Specific finds from real audits — not the most common issues, but ones I didn't expect the system to catch at this level of detail.

- Broken contact form — The form returned a success message but submissions never arrived. The client had no reason to check it regularly — who does? Every lead that came through it was lost silently.

- HTTP downgrade — The server was redirecting HTTPS requests to plain HTTP, stripping the encryption. Not a misconfiguration that announces itself; you have to follow the request chain to see it.

- Obfuscated third-party script — Loaded from an unusual domain with deliberately obfuscated code, the kind a standard inventory flags as "unknown" and leaves at that. The audit de-obfuscated it, identified the vendor, and classified it: not a security threat, but an undeclared marketing pixel absent from the privacy policy. The distinction matters: an undeclared pixel is a legal problem, while an unidentified script is potentially a security problem.

- PHP 7.4 on a production server — PHP 7.4 reached end-of-life in November 2022 and has received no security patches since. The server was also advertising its PHP version in HTTP response headers, which is bad practice in its own right (you're publishing your attack surface). The audit caught both: the outdated runtime and the disclosure.

- Incorrect redirects, stale 404s, URLs pointing to the client's old brand — Pages still linked to a previous domain after a rebrand. Some redirects were wrong, some destinations were 404s, none of it visible from the homepage.

- Typos in metadata and structured data — Product names misspelled in schema.org markup. Title tags with stray characters. Small things that compound — structured data errors affect rich results eligibility, title typos affect click-through.

- Missing security headers — No Content-Security-Policy, no HSTS, no Referrer-Policy. Standard defenses, straightforwardly absent.

- Quintuplicated JavaScript libraries — The same library loaded five times from different sources — accumulated across CMS migrations, plugin installs, and theme changes. Each instance adding load time; none of them cleaning up after the previous one.

- Multiple ePrivacy and GDPR violations — Cookies set before consent. Trackers firing in the deny state. Tags in the container undisclosed in the privacy policy. Each verified by observing actual network traffic in each consent state.

- DOM quality assessment — Not just "the page is slow," but a judgment on whether the HTML is clean and semantic or whether the DOM is bloated with unnecessary nesting, redundant wrappers, and presentational markup. The kind of observation a senior developer makes in a code review. I didn't expect this to come out of an automated audit.

- A proactive CDN recommendation — On a site where it made sense, the audit didn't just flag slow image delivery — it proposed serving images from a fast, cookieless CDN with proper cache headers, and explained why that specific site would benefit. Not a generic best-practice bullet. An actual recommendation shaped by what the site was doing.

The last two weren't in the audit checklist. The model found them on its own.

None of the rest are exotic. They were on real client websites, in production, unnoticed because no one had a systematic process to check them.
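To make "systematic" concrete, take the HTTP downgrade from the list above. Once the redirect chain has been recorded, spotting the downgrade is a mechanical comparison of each hop with the next. This is a minimal sketch under assumptions: the function name `find_downgrades` is hypothetical, and the chain is taken as a plain list of URLs in the order they were visited.

```python
from urllib.parse import urlsplit


def find_downgrades(chain: list[str]) -> list[tuple[str, str]]:
    """Return (from_url, to_url) pairs where a hop goes from https to http."""
    downgrades = []
    # Compare each URL in the chain with the one it redirected to.
    for src, dst in zip(chain, chain[1:]):
        if urlsplit(src).scheme == "https" and urlsplit(dst).scheme == "http":
            downgrades.append((src, dst))
    return downgrades
```

If the chain is collected with the `requests` library, one way to build it is `[r.url for r in response.history] + [response.url]`. Any capture method works, as long as every intermediate hop is kept and not only the final destination.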

What Gets Delivered

A full written report and a presentation — covering all 11 dimensions, with specific findings, evidence references, and prioritized recommendations.

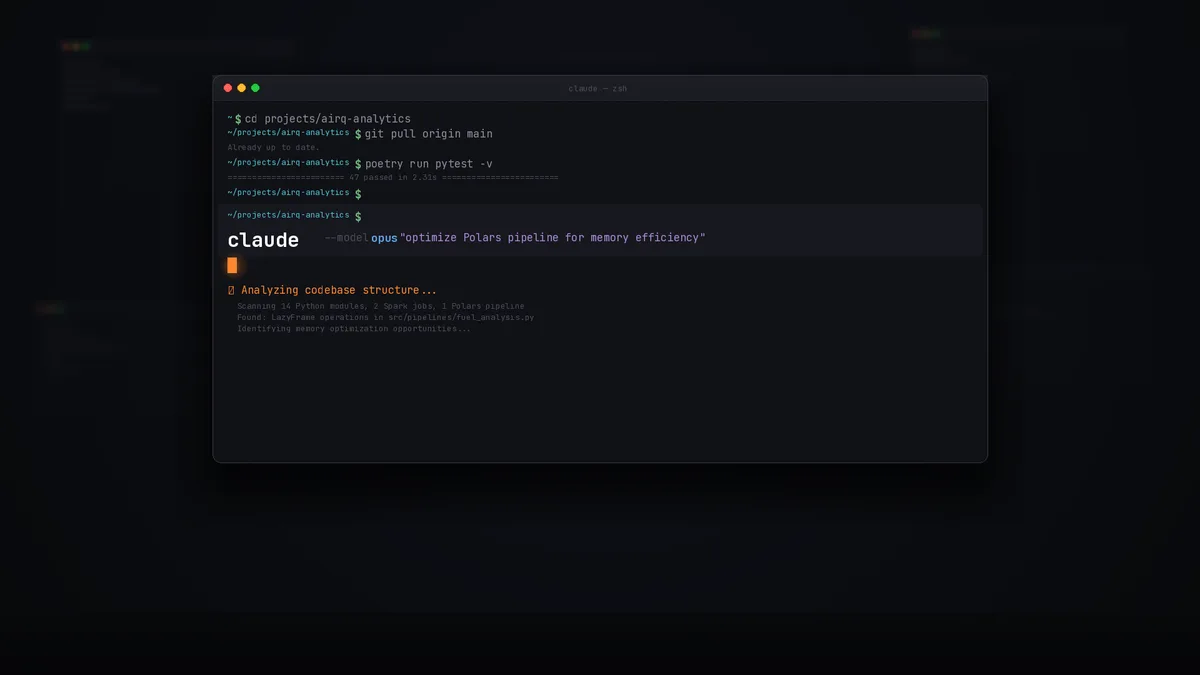

The audit navigates the live site, captures outgoing HTTP requests, reads the JS console, and calls open-source tools where more granularity is needed. Claude Code orchestrates it in the background via MCP and scripts. No manual steps.

Every finding is saved to structured files, which provide the evidence we include in the client presentation. Not just conclusions: the raw observations behind them.

Why AI Specifically

The gap between what an audit covers and what a site actually does is mostly a time problem. Checking 11 dimensions manually would take a senior auditor 2–3 days at minimum, and even then some dimensions — consent state verification, full third-party script enumeration, redirect chain tracing across 50+ URLs — don't scale to manual execution.

Three things change when AI runs the audit:

Coverage stops depending on what the auditor thought to check. Human auditors develop heuristics. A clean-looking site gets less infrastructure scrutiny than a visibly neglected one. A long-term client gets assumptions extended to them. These are reasonable time-saving instincts — and they're exactly how things get missed. The PHP 7.4 finding and the HTTP downgrade were caught by applying the same systematic check to every site regardless of first impression. No auditor scoping to what looks risky would have checked a modern-looking site's response headers for PHP version disclosure.
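A check like this is cheap to run on every site, which is the point. The sketch below shows roughly what it could look like for response headers. It assumes the version is disclosed through the `X-Powered-By` header (the one PHP emits when `expose_php` is enabled). The `EOL_PHP_BRANCHES` set is an illustrative value that would need to be kept current, and `check_headers` is a hypothetical name.

```python
import re

REQUIRED_SECURITY_HEADERS = (
    "Content-Security-Policy",
    "Strict-Transport-Security",  # HSTS
    "Referrer-Policy",
)

# Illustrative only: PHP 7.4 is the branch named in this article.
EOL_PHP_BRANCHES = {"7.4"}


def check_headers(headers: dict[str, str]) -> list[str]:
    """Return a list of issues found in one response's headers."""
    # HTTP header names are case-insensitive, so normalize before lookup.
    lowered = {name.lower(): value for name, value in headers.items()}
    issues = []
    for name in REQUIRED_SECURITY_HEADERS:
        if name.lower() not in lowered:
            issues.append(f"missing header: {name}")
    match = re.search(r"PHP/(\d+\.\d+)", lowered.get("x-powered-by", ""))
    if match:
        branch = match.group(1)
        issues.append(f"version disclosure: PHP {branch} in X-Powered-By")
        if branch in EOL_PHP_BRANCHES:
            issues.append(f"end-of-life runtime: PHP {branch}")
    return issues
```

Version disclosure and end-of-life status are reported separately because they are separate problems: a current PHP version advertised in headers is still a disclosure, and an outdated runtime is still outdated once the header is hidden.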

Consent state verification across all paths becomes tractable. To verify consent mode correctly, you need to observe what fires with no consent given, after acceptance, and after rejection — across multiple page types, including entry points that bypass consent banners entirely (direct URL, email click, affiliate referral). A manual audit checks one or two states on one or two pages and generalizes. Systematic verification checks every combination and captures the network traffic to prove it. The GDPR violations listed above were verified this way — not inferred.
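The combinatorics are easy to underestimate. As a rough sketch, the test matrix and the deny-state check could look like the code below. The state names, the entry-point labels, the function names, and the idea of matching requests against a list of known tracker hosts are all assumptions made for illustration. Which requests actually count as a violation depends on the site's setup and on legal interpretation.

```python
from itertools import product
from urllib.parse import urlsplit

CONSENT_STATES = ("no_choice", "accepted", "rejected")
ENTRY_POINTS = ("direct_url", "email_click", "affiliate_referral")


def build_matrix(page_types: list[str]) -> list[tuple[str, str, str]]:
    """Every (consent state, page type, entry point) combination to test."""
    return list(product(CONSENT_STATES, page_types, ENTRY_POINTS))


def tracker_requests_without_consent(
    state: str, request_urls: list[str], tracker_hosts: set[str]
) -> list[str]:
    """Requests to listed tracker hosts seen while consent was not granted."""
    if state == "accepted":
        return []
    return [u for u in request_urls if urlsplit(u).hostname in tracker_hosts]
```

Two page types already produce 18 combinations, and each one needs its own captured traffic. That is why a manual audit samples a couple of them and generalizes.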

Evidence is captured during the audit, not reconstructed from memory. A human auditor takes notes and writes findings from those notes afterward. The underlying evidence — the specific network request, the dataLayer push, the console error with the exact payload — typically disappears. When an implementation team asks "are you sure that fires on mobile?", there's often no definitive answer. A systematic approach saves structured evidence for every finding as the audit runs. Not just the conclusion: the raw observation behind it.
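As an illustration of what "structured evidence" can mean in practice, here is a minimal sketch that serializes one observation as a JSON line at the moment it is captured. The field names and the `evidence_line` helper are hypothetical, chosen for this example, and are not the format our reports actually use.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def evidence_line(
    finding_id: str, kind: str, payload: dict, captured_at: Optional[str] = None
) -> str:
    """Serialize one raw observation as a single JSON line."""
    entry = {
        "finding_id": finding_id,
        "kind": kind,  # e.g. "network_request", "datalayer_push", "console_error"
        # Timestamp the observation when it is recorded, not when it is written up.
        "captured_at": captured_at or datetime.now(timezone.utc).isoformat(),
        "payload": payload,
    }
    return json.dumps(entry, sort_keys=True) + "\n"
```

Appending each line to a file opened in `"a"` mode keeps the raw observations in capture order, so the question "are you sure that fires on mobile?" can be answered by pointing at the recorded request.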

The two unexpected findings — DOM quality assessment and the CDN recommendation — emerged from the same mechanism: the AI is reasoning about what it observes, not executing a checklist. When it encounters a bloated DOM, it has context to judge whether the structure is semantically meaningful or just accumulated cruft. A human auditor working a full-day engagement makes that judgment too — but it depends on who's in the room, what caught their eye, and whether it made it into the notes before the call ended.

Speed means decisions don't wait on the audit. Evidence means implementation teams don't debate whether the problem exists. Comprehensive coverage means nothing surfaces as a surprise three months later.